Civilization Needs Light

The true measure of a civilization is how much light it casts into outer space. Viewed from space at night, the large cities light up the face of the Earth like a Christmas tree. That’s how we know civilization exists on Earth.

Edison’s Incandescent Bulb

Invention = 99% Perspiration + 1% Inspiration

Thomas Edison once commented that being prolific in any field, including inventing things, required a simple formula. He worked hard at his inventions, slaving for weeks to find solutions to common problems, until the proverbial light bulb came on. His inspiration and his tenacity can be an inspiration for us all. We just need to keep trying and when we have an epiphany, voila – the solution.

Passing Electrons

When electrons pass through a conductor (or more correctly through a partial conductor), heat and light can be generated. Edison experimented tirelessly with different conductors and with different containment gases. The filament in his bulb eventually “burned out” due to the heat’s breaking down the structure of the filament material, but his efforts finally brought us to the familiar screw-in socket in use throughout the civilized world.

AC versus DC

Many of Edison’s numerous inventions relied on delivery of electrons to homes and businesses. One of the great debates of the day was over transmission of electricity, either by direct current (DC) or by alternating current (AC). As you will see later, Edison may have been right after all. The advantage of alternating current is that transformers can easily step it up to a high voltage, so it can be sent longer distances over copper wires than the low-voltage direct current of Edison’s day could be. The picture is less clear-cut these days, when extremely high voltages are considered, but at up to a few hundred kilovolts AC is transmitted long distances more efficiently, because a higher voltage means less current and so less loss to heating in the transmission wire itself.
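Here is a rough sketch of why voltage matters so much for those heating losses. For a fixed amount of power, the current is I = P/V and the heat lost in the wire is I²R. The power level, wire resistance, and voltages below are made-up illustrative numbers, not figures for any real line, and the sketch ignores AC effects such as reactance.

```python
def line_loss_watts(power_w: float, volts: float, resistance_ohm: float) -> float:
    """Resistive heating in a line that carries power_w at the given voltage."""
    current_a = power_w / volts              # I = P / V
    return current_a ** 2 * resistance_ohm   # P_loss = I^2 * R


POWER_W = 1_000_000     # 1 MW sent down the line (assumed)
RESISTANCE_OHM = 5.0    # total resistance of the wire (assumed)

for volts in (10_000, 100_000):
    loss = line_loss_watts(POWER_W, volts, RESISTANCE_OHM)
    print(f"{volts:>7,} V: {loss:>8,.0f} W lost ({loss / POWER_W:.2%} of the power sent)")
```

With these assumed numbers, raising the voltage tenfold cuts the heating loss a hundredfold, from 50,000 W to 500 W.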

Fluorescent Lighting

When certain gases are stimulated with AC, they heat up and glow. The light given off can be quite bright. In fact, fluorescent technology generally uses less electrical power than incandescent technology to produce a given lumen output. We won’t get into the physics of the mercury vapor causing the phosphor coating to glow, but you can read about it at Sylvania.com if you’re interested.

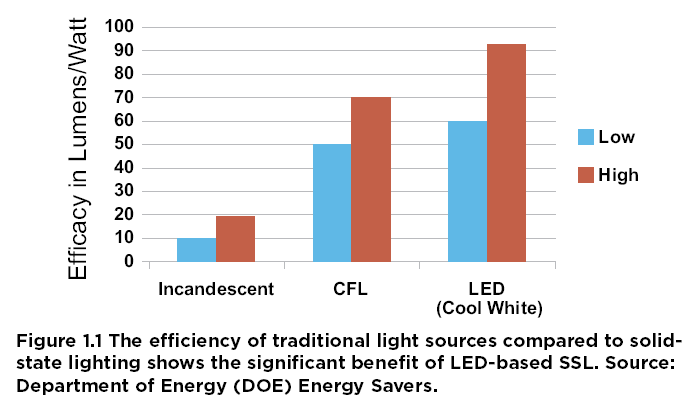

The main point about fluorescent technology is that it is more efficient and in recent years has been developed into compact fluorescent (CFL) bulbs, which have been designed to screw into Edison’s old fixtures. Efficiencies of 4x to 5x those of incandescent bulbs are common. Material and manufacturing costs are higher for CFL bulbs, but over the lifetime of a bulb the energy cost to light it can simply swamp the purchase price.

Lifetime Cost

If a bulb burns 24×7, then in a year a 10W bulb will consume 87,600 W-hr (87.6 kW-hr) of energy, while a 60W bulb will consume 6x that. At 10 cents/kW-hr, a 10W CFL with the same lumen output as a 60W standard lightbulb will cost only $8.76 a year to run, versus $52.56. The difference between the $10 purchase price of the CFL and the $2 price of the incandescent is swamped by the energy cost. (You can read more at GE’s website – yes, that’s Edison’s company.)

Moreover, the incandescent bulb may be rated to last an average of 3,000 hours, while the CFL may be rated to last 30,000 hours. So, during that year the incandescent bulb may have to be replaced twice or more, while the compact fluorescent may not need to be replaced for another 2-1/2 years. Even the purchase cost will favor the longer-lasting CFL bulbs.
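The arithmetic above is easy to check with a few lines of code. This is only a sketch of the first-year cost using the wattages, prices, and rated lifetimes quoted in the text; the function names are my own, and it assumes a fresh bulb is bought at the start of the year.

```python
import math

HOURS_PER_YEAR = 24 * 365    # a bulb burning 24x7
PRICE_PER_KWH = 0.10         # 10 cents/kW-hr, as in the text


def yearly_energy_cost(watts: float) -> float:
    """Dollars of electricity used by a bulb left on all year."""
    return watts * HOURS_PER_YEAR / 1000 * PRICE_PER_KWH


def bulbs_used_in_year(rated_hours: float) -> int:
    """Bulbs consumed in one year, counting the one installed at the start."""
    return math.ceil(HOURS_PER_YEAR / rated_hours)


# (name, watts, purchase price in dollars, rated life in hours), all from the text
BULBS = [("CFL", 10, 10.00, 30_000), ("incandescent", 60, 2.00, 3_000)]

for name, watts, price, rated_hours in BULBS:
    energy = yearly_energy_cost(watts)
    count = bulbs_used_in_year(rated_hours)
    total = energy + count * price
    print(f"{name:>12}: ${energy:.2f} energy + {count} bulb(s) = ${total:.2f}")
```

Under these assumptions the first year comes to about $18.76 for the CFL and $58.56 for the incandescent, since three incandescent bulbs are used up along the way.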

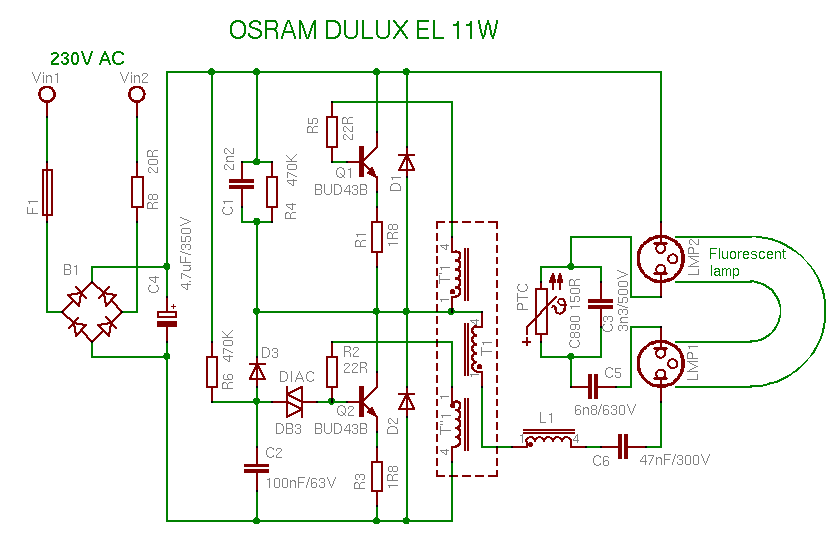

There is an oddity about CFL bulbs for the inquisitive minds. AC (120 or 240 VAC) is delivered to the base of the bulb so that it can screw into a standard lighting fixture, but inside the bulb the AC is first converted to DC (for you EEs, see the bridge). This may seem particularly odd since that DC is then re-converted to AC but at a much higher voltage. To start the gas in the tube fluorescing you may need to apply up to 900 VAC across the tube. Once it has fired off, the voltage is still AC but can be reduced to a few hundred volts.

So, CFLs and the 4-foot long fluorescent lights hanging overhead in factories and Wal-Mart aren’t all that different on the inside, except that the ballast common in standard fluorescent lighting is handled by electronic circuitry inside the CFL. Fluorescents also have a couple of drawbacks.

- The floor lamp bulb with 2 settings, say, 25W and 100W, doesn’t exist in CFL. The bulb is either ON or OFF; there are no dual-Wattage bulbs.

- Dimming in fluorescents is more complex (and costly). Special CFLs can be dimmed, but it’s not like the incandescent dimming.

Along came Holonyak

What LED to more efficient Lighting?

Once again a GE inventor discovered the ability to light things but this time using semiconductor diodes. These devices have evolved greatly in the last decade to the point that Light Emitting Diodes (LEDs) are practical.

You may have seen small flashlights powered by AAA cells that are extremely bright. The “bulbs” in these flashlights are actually LEDs, sometimes 5 to 10 of them (or more on larger units). LEDs run off DC, not AC, and the efficiency of these in terms of lumens per Watt of electrical power is simply shocking!

LEDs seem to be averaging 46 lumen/Watt of power, which puts them head to head with CFL. Advantageously, LEDs operate off DC voltage at much lower supply levels, i.e. around 3 VDC or so. The junction of an ordinary silicon diode has only about a 0.7 V drop across it; an LED’s forward drop is somewhat higher, typically around 2 to 3.5 V depending on its color.

Even with a lot of current running through it, the voltage across the LED stays at only a few volts. Most LED modules (incorporating the actual diodes surrounded by other circuitry) can operate on less than 43 Volts DC. They may need several Amperes of operating current to produce lots of lumens, but the more dangerous 120 or 240 VAC is not needed to operate the module.
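To get a feel for the currents involved, here is a small sketch based on P = V × I. It uses the 46 lumen/Watt figure from above; the 800-lumen target (roughly a 60W incandescent) and the 12 V and 24 V supplies are my own assumptions, and driver losses are ignored.

```python
def supply_current_amps(lumens: float, lumens_per_watt: float, supply_volts: float) -> float:
    """DC current a lamp draws from its supply, ignoring driver losses."""
    watts = lumens / lumens_per_watt   # electrical power needed for the light output
    return watts / supply_volts        # I = P / V


LUMENS_PER_WATT = 46.0   # average LED efficacy quoted in the text
TARGET_LUMENS = 800.0    # assumed: roughly a 60W incandescent bulb

for volts in (12.0, 24.0):   # assumed low-voltage DC supplies
    amps = supply_current_amps(TARGET_LUMENS, LUMENS_PER_WATT, volts)
    print(f"{volts:.0f} VDC supply: {amps:.2f} A")
```

The lower the supply voltage, the more current the same light output needs, which is why low-voltage modules can call for several Amperes.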

The reason both LEDs and CFLs are designed to operate off standard 120/240 VAC is compatibility. Standard house current in residences and businesses around the world is nominally either 120 VAC or 240 VAC. We simply don’t have a 5 VDC or 12 VDC bus running around the house like the one you might find inside a computer. Neither do we have houses or businesses wired for any other standardized DC voltage.

LEDs can more easily solve the 2-setting bulb issue and the dimmer issue. By illuminating only a portion of the array of LEDs the light output can easily be reduced. For the common Edison base bulb with 2 connections, one for the low Wattage filament and one for the high, the LED equivalent can have some LEDs connected to the low-Wattage contact and the remainder to the high-Wattage contact – simple. Dimming with LEDs is a little more complex but far easier to solve than for CFLs and with greater precision.

The Sun Illuminates the Problem

Photovoltaic cells generate DC when placed in sunlight. The amount of current is related to the density of light hitting the surface of the PV cell. So, when the sun crosses the sky and changes the angle of light hitting the solar cell, the current produced changes. The current is at its maximum when the sun’s rays strike the cell perpendicular to its surface.

Daily the sun changes its left-right angle to the solar cell as it rises in the morning and sets at night, but its top-bottom angle to the solar cell also changes with the seasons. Ever notice the “figure 8” on a globe? This figure, called the analemma, describes the angle of the sun in the sky (from north to south), month by month. In summer the sun is more directly overhead than in winter, when it comes in at a lower angle to the ground.

This phenomenon comes from the roughly 23.5 degree tilt of the axis that the Earth rotates on, and it gives us the seasons. Placing a solar cell panel flat on a roof may seem logical, but with the daily and seasonal changes in angle the cell will not perform well.
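A quick sketch puts numbers on the seasonal effect. It assumes the direct-beam output of a cell falls off with the cosine of the angle between the sunlight and the perpendicular to the cell, and it uses the standard solar-noon relation of 90 degrees minus the gap between latitude and the sun’s declination. The 40 degree latitude is a hypothetical site, and a tilt of about 23.5 degrees is assumed for the Earth’s axis.

```python
import math

AXIAL_TILT_DEG = 23.5   # approximate tilt of the Earth's axis (assumed value)
LATITUDE_DEG = 40.0     # hypothetical northern-hemisphere site


def relative_output(incidence_deg: float) -> float:
    """Fraction of peak direct-beam output when light arrives incidence_deg
    away from the perpendicular to the panel (simple cosine model)."""
    return max(0.0, math.cos(math.radians(incidence_deg)))


def noon_elevation_deg(latitude_deg: float, declination_deg: float) -> float:
    """Height of the sun above the horizon at solar noon."""
    return 90.0 - abs(latitude_deg - declination_deg)


SEASONS = (
    ("summer solstice", AXIAL_TILT_DEG),
    ("equinox", 0.0),
    ("winter solstice", -AXIAL_TILT_DEG),
)

for season, declination in SEASONS:
    elevation = noon_elevation_deg(LATITUDE_DEG, declination)
    # For a panel lying flat, the incidence angle is 90 degrees minus the elevation.
    flat = relative_output(90.0 - elevation)
    print(f"{season:>15}: noon sun at {elevation:.1f} deg, flat panel at {flat:.0%} of peak")
```

In this simple model a flat panel at that latitude does well at noon in midsummer and loses more than half of its direct-beam output at noon in midwinter.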

If you could change the angle daily and seasonally, the performance would be maximal (except for weather). But mechanical means to move a panel consume energy and are prone to failure, compared to a fixed bracket on the roof or side of a building.

The energy is also produced when the sun is out, not necessarily when you need it. During the day you may not be home. Nighttime rolls around and you go home to a dark house. Now you need the energy from the sun to power your lights. So, in addition to producing energy from PV “solar” cells you need to be able to store the energy for later use.

Selling Electricity to Your Neighbors

If you did invest in solar cells to produce electricity, you could “co-generate” and send energy in AC form back over the distribution grid to places where it’s needed. When you leave home for work, your workplace probably needs electricity to run the lights there and power the computers and other equipment that the business runs on. And current thinking is that we simply don’t store energy anywhere in the system.

Who Gives a Dam

Yes, hydro-electric plants (dams) store energy by not releasing the water, but apart from dedicated pumped-storage plants, no one runs pumps in reverse to put the water back behind the dam. Yes, there are some giant capacitor banks in the electric grid to handle inductive loads, but they store only milliseconds of energy. Your house is still tied to that grid, and when you go home it needs the dam to release water, or a plant to burn some natural gas to power a turbine, or a reactor to boil some water for our steam-powered nuclear turbines.

Storage is Simple with DC, not AC

Maybe from this you can tell, but to be explicit: DC can be stored in batteries, while AC must be converted to DC first to store it and then re-converted to AC to use it. Lots of DC storage devices exist. Hundreds of millions of 12 VDC batteries have been manufactured for automobiles. Perhaps some other voltage may be appropriate for storage of locally co-generated electricity, like 48 VDC or 6 VDC, but the storage technology is well known.
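As a rough sketch of what that storage means in practice, the battery capacity needed is the energy used (Watts × hours) divided by the bank voltage, then scaled up because a battery shouldn’t be drained completely. The 100 W evening lighting load, the 6 hours of use, and the 50% usable fraction are all assumptions for illustration; the three voltages are the ones mentioned above.

```python
def required_amp_hours(load_w: float, hours: float, bank_volts: float,
                       usable_fraction: float) -> float:
    """Battery capacity (Amp-hours) needed to run load_w for the given hours."""
    energy_wh = load_w * hours                        # energy drawn overnight
    return energy_wh / bank_volts / usable_fraction   # Ah = Wh / V, derated


LOAD_W = 100.0          # assumed LED lighting load
HOURS = 6.0             # assumed hours of evening use
USABLE_FRACTION = 0.5   # assumed: only half the rated capacity is drawn

for bank_volts in (6.0, 12.0, 48.0):
    amp_hours = required_amp_hours(LOAD_W, HOURS, bank_volts, USABLE_FRACTION)
    print(f"{bank_volts:.0f} VDC bank: {amp_hours:.0f} Ah")
```

With these assumed numbers a 12 VDC bank needs about 100 Ah, which is in the range of a single large automotive-style battery.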

Edison carried on, as previously mentioned, a campaign to have DC used for household appliances and lighting. The issue was resolved in favor of Tesla, making transmission lines and wiring inside buildings all alternating current (AC). For modern day electronics this is a poor choice. After all, Edison may have been correct. Hindsight is often 20-20.

Conversion from AC to DC (or DC to AC) generates heat from the lost power. Transformers heat up from internal resistance and current-carrying wires heat up, too. A 10% loss for high tension lines may seem acceptable, but when you’re dealing with megaWatts of power, the amount of heat generated along the length of the high tension line is enormous.

- AC is the mode of choice for magnetic equipment, like motors

- DC is the mode of choice for modern electronic devices and now lighting, too

We pay for that heating of wires in higher costs for electricity. If a source of electric power is 20 miles away, versus 20 feet, and the current flow heats up the wire all along the distance, which source makes more sense in the overall picture?

New Concept Saves a Para-dimes

Saving money and not heating up wires or converter boxes just makes sense for DC-powered devices. Our new paradigm of local DC power in houses and buildings can save quite a few dimes. With PV “solar” cells (and possibly other sources like wind) charging local batteries, the energy is stored as it is generated, when the sun is up or the wind is blowing. We use it from the storage batteries as needed, and we don’t transport it miles away over lossy lines into a network.

We still may need a network for backup power and we still prefer AC for motors and other electro-magnetic uses. Converting stored DC locally to run AC motors is less efficient than taking AC and down-converting the voltage for the motor. DC motors do exist, but they are somewhat noisy (electrically) compared to AC and not quite as efficient, plus their brushes wear out, a problem the common AC induction motor doesn’t have.

Besides, every paradigm shift needs a path to go from A to B, i.e. we need to be able to retrofit all the houses and buildings to operate on DC with new low voltage wiring, and the existing stock of lighting fixtures and bulbs needs to be accommodated in the changeover process. The shift needs to be as painless as possible. Changing from gasoline to propane or from gasoline to electric would be equally challenging.

First step: add new DC wiring to homes, maybe 12 V, 24 V or 5 V. This wiring would be overlaid onto the existing structure. It would have to terminate in a service area where we can add a new battery bank.

Second step: add solar cells to power the battery bank plus control circuits. Solar cells currently cost a pretty penny (per Watt, that is). Perhaps in volume they’ll come down a lot to make the payback period less than a year, but right now the investment would take years to recoup.

Third step: change fixtures and devices to utilize the DC power now installed. Every time an incandescent bulb burns out we should consider replacing it with LEDs, maybe even running the fixture off DC instead of AC. In other words, we would re-wire each lighting fixture as its old bulb retires. Of course, wholesale replacement may make sense economically if the payback is short enough. Slowly, over time all the lighting fixtures would be replaced and rewired to work on the DC overlaid wiring. The AC wiring never really goes away until motors can be re-created for our DC world.

Fourth step: solar cells would probably need more oomph. Adding more on the roof might require reinforcement. We’ll also need to add capacity to the battery bank, if it wasn’t large enough to begin with. At some point other energy generating methods would look attractive to add on, such as wind-turbine generation, fuel cells and the like.
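The payback question raised in the second step comes down to simple division: the installed cost over the yearly savings on the electric bill. The sketch below uses the 10 cents/kW-hr rate from earlier; the $6,000 installed cost and the 2,000 kW-hr of yearly output are purely hypothetical placeholders, so plug in real quotes before drawing any conclusions. It also ignores battery replacement, maintenance, and interest.

```python
def simple_payback_years(installed_cost: float, kwh_per_year: float,
                         price_per_kwh: float) -> float:
    """Years for the electricity savings to repay the installed cost."""
    yearly_savings = kwh_per_year * price_per_kwh
    return installed_cost / yearly_savings


INSTALLED_COST = 6_000.0   # hypothetical system price in dollars
KWH_PER_YEAR = 2_000.0     # hypothetical yearly energy produced and used
PRICE_PER_KWH = 0.10       # 10 cents/kW-hr, as used earlier in the text

years = simple_payback_years(INSTALLED_COST, KWH_PER_YEAR, PRICE_PER_KWH)
print(f"Simple payback: about {years:.0f} years")
```

Halving the installed cost or doubling the price of grid electricity halves the payback period, which is why volume pricing matters so much here.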

Does the connected grid make sense now? Should we have a massive power grid with huge generators of electrical power that send current using AC down from nuclear plants or hydro-electric plants for scores of miles to transformers that buzz and heat up where the electricity is converted for local use in a house that re-converts the AC into DC for powering flatscreen TVs and LED lighting?

Or, should we try to co-generate DC locally using solar cells, store the energy in local batteries, and power those electronic devices and LEDs directly with the DC?